- Blog

- How to use vidbox

- Torrent download vray sketchup 2016

- Crack wincc flexible 2008 sp2

- Xeon xbox emulator download

- Roku emulator apk

- Create bootable usb os x lion

- World conqueror 4 mod android big map

- The innkeepers rotten tomatoes

- 555 timer pspice model library

- Sketchup pro 2015 promo code

- Spbu pertalite

- The mavericks greatest hits cd

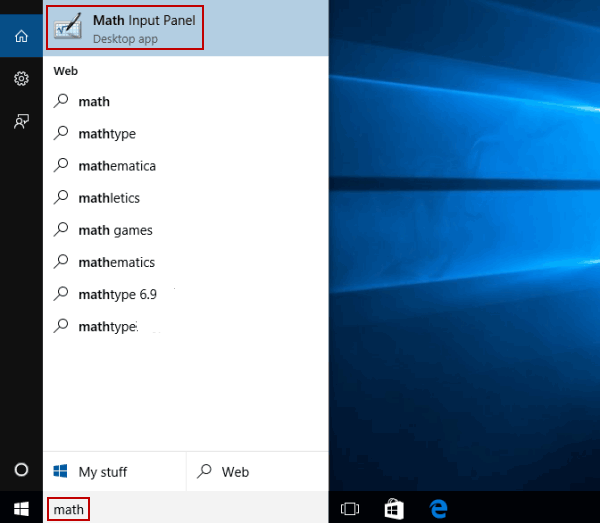

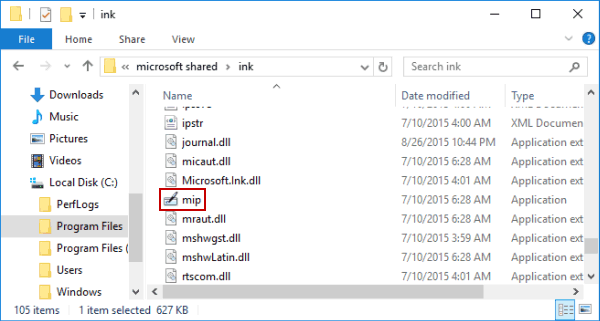

- How to use math input panel in gmath

- Psx emulator ps2

- Planet coaster water park

Natural language processing (NLP) is a research field that aims to make machines understand the meaning of text data, which is typically a list of words. In some cases, texts are not understandable in their closed form, i.e., without understanding data other than the words. Numerals are an important form of such non-word data, not only because many documents are accompanied by related metadata, such as publication dates expressed as numbers, but also because the documents themselves contain numerals such as “three people”, “500 dollars”, and “90 cm”. Jointly mining texts and their associated numerical metadata has many variations, and many studies have been proposed. For example, predicting the star ratings given with product review texts is a typical task in such research areas. Location-aware text mining can be considered as mining association rules between words and positional data (i.e., longitude and latitude). Even joint learning of texts and images can be seen as mining relations between texts and associated RGB data.

In contrast to such grounding-type research, studies on mining numerals explicitly written in text have received little attention. Recently, however, more and more studies have been proposed in this area, partly due to recent advances in deep neural network-based language modeling. In this survey, we try to provide a quick overview of the history and recent advances of this research field, ranging from traditional tasks like information retrieval to emerging ones such as numerical reading comprehension.

Aramaki et al. proposed to obtain the physical size of entities by using Web search with patterns like “book (*cm x *cm)”. Another study proposed combining the knowledge obtained using these patterns with object detection from images to achieve more reliable object size knowledge. Davidov and Rappoport proposed a similar approach but augmented their method by obtaining terms similar to the given object using the Web and WordNet. Takamura and Tsujii took a similar approach, using Web search for linguistic patterns, e.g., “the size of A”, but they enhanced their patterns with more indirect clues, such as WordNet relations and an n-gram corpus for explicit patterns, e.g., “A is longer than B”, and implicit patterns, e.g., “put A in B”, and used a machine learning approach to determine their weights. Another line of work proposed to obtain numerical common sense by searching for numerical expressions in a Web corpus, calculating the distribution of numbers given contexts that are specified syntactically, such as “verb=give, subj=he, …”, and predicting labels such as small, normal, or large for given numbers in text.

Recently, a large dataset called Distribution over Quantities (DoQ) was provided by Elazar et al. It contains ten dimensions (TIME, CURRENCY, LENGTH, AREA, VOLUME, MASS, TEMPERATURE, DURATION, SPEED, VOLTAGE) for various kinds of words, including nouns, adjectives, and verbs. They explored the co-occurrence of words and numeracy in large Web data. Table 2 summarizes these approaches and Table 3 shows the existing data sets.

Several BERT models pretrained on huge amounts of text data are available to the public. Using such pretrained language models to predict or assess the numeracy in documents is an emerging trend. Typically, the models are given the ability to predict numbers in one of three ways: by simply using masked language models in which the numbers to be predicted are replaced with tokens, by adding numeracy inference modules to the language model, or by fine-tuning in a setting where the output is a discretized version of the target numeracy. One such study investigated how pretrained language models like BERT can predict (the discretized version of) attributes with continuous numeric values, such as MASS or PRICE, evaluating on DoQ.
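As a rough illustration of the pattern-based idea discussed in this section (collecting sizes with a surface pattern like “book (*cm x *cm)” and then judging a new number as small, normal, or large against the collected distribution), here is a minimal Python sketch. It is not the method of any of the cited studies: the regular expression, the toy sentences, the choice of the longer side as "the size", the function names `collect_sizes` and `label_value`, and the 0.75 threshold are all assumptions made for illustration.

```python
import re
from collections import defaultdict

# Assumed surface pattern, modeled on "book (*cm x *cm)": a word followed by
# two centimeter values in parentheses.
SIZE_PATTERN = re.compile(
    r"\b([a-z]+) \((\d+(?:\.\d+)?) ?cm x (\d+(?:\.\d+)?) ?cm\)"
)

# Toy stand-in for Web search results (hypothetical data).
SENTENCES = [
    "I bought a book (21cm x 15cm) yesterday.",
    "The book (18cm x 11cm) fits in a pocket.",
    "A book (24cm x 17cm) was on the desk.",
    "This book (30cm x 22cm) is an atlas.",
    "Old book (20 cm x 13 cm) for sale.",
    "A table (120cm x 60cm) stood there.",
]


def collect_sizes(sentences):
    """Map each matched word to the list of its observed longer sides (cm)."""
    sizes = defaultdict(list)
    for sentence in sentences:
        for word, first, second in SIZE_PATTERN.findall(sentence.lower()):
            # Assumption: use the longer of the two sides as the object's size.
            sizes[word].append(max(float(first), float(second)))
    return dict(sizes)


def label_value(value, observed, threshold=0.75):
    """Label a number relative to the observed values for the same word."""
    if not observed:
        return "unknown"
    n = len(observed)
    share_below = sum(v < value for v in observed) / n
    share_above = sum(v > value for v in observed) / n
    if share_above >= threshold:
        return "small"   # most observed values are larger
    if share_below >= threshold:
        return "large"   # most observed values are smaller
    return "normal"


sizes = collect_sizes(SENTENCES)
print(sizes["book"])                   # [21.0, 18.0, 24.0, 30.0, 20.0]
print(label_value(50, sizes["book"]))  # large
print(label_value(21, sizes["book"]))  # normal
```

With only a handful of matches per word the labels are unstable; a Web-scale corpus is what would make a distribution of this kind informative.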